Dr. Sean Meyn received the B.A. degree in mathematics from the University of California, Los Angeles (UCLA), in 1982 and the Ph.D. degree in electrical engineering from McGill University, Canada, in 1987 (with Prof. P. Caines). He is now Professor and Robert C. Pittman Eminent Scholar Chair in the Department of Electrical and Computer Engineering at the University of Florida and is director of the Laboratory for Cognition & Control. His academic research interests include theory and applications of decision and control, stochastic processes, and optimization. He has received many awards for his research on these topics. Current funding comes from NSF, ARO, and Google Research.

He is a Fellow of the IEEE and an IEEE CSS Distinguished Lecturer, and he holds an Inria International Chair for collaborations with colleagues in Paris, France.

He has held visiting positions at universities all over the world, including the Indian Institute of Science, Bangalore, during 1997-1998, where he was a Fulbright Research Scholar, and sabbatical stays at MIT, Berkeley, Stanford, United Technologies Research Center (UTRC), and Inria Paris.

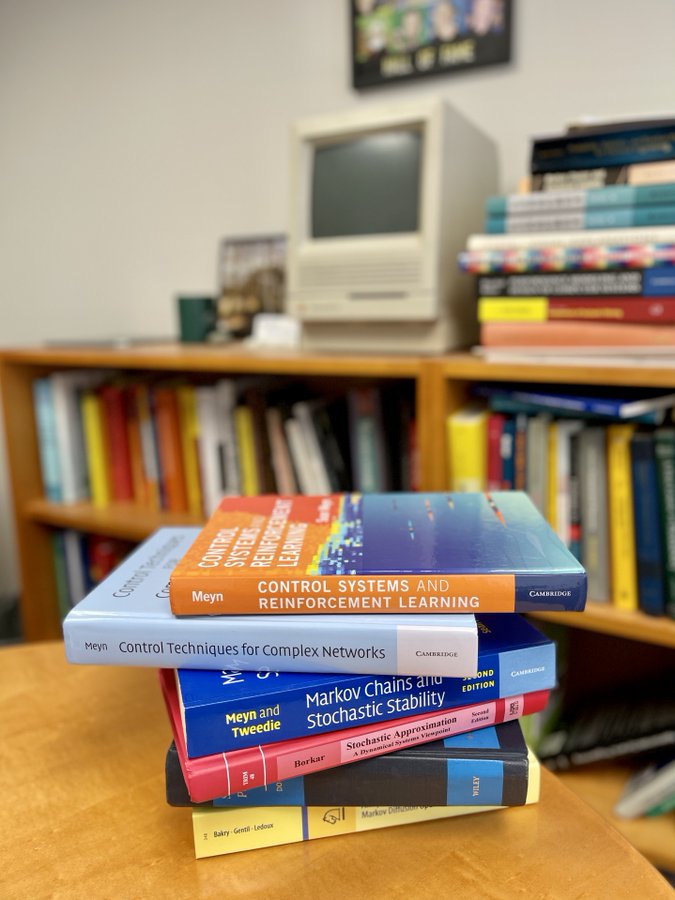

His award-winning 1993 monograph with Richard Tweedie, Markov Chains and Stochastic Stability, has been cited thousands of times in journals from a range of fields. The latest version is published in the Cambridge Mathematical Library. The monograph is a foundation for current research in reinforcement learning.

For the past ten years his applied research has focused on engineering, markets, and policy in energy systems. He regularly engages in industry, government, and academic panels on these topics, and hosts an annual workshop at the University of Florida.

inControl episode 5 – Sean Meyn (@nccr_automation)